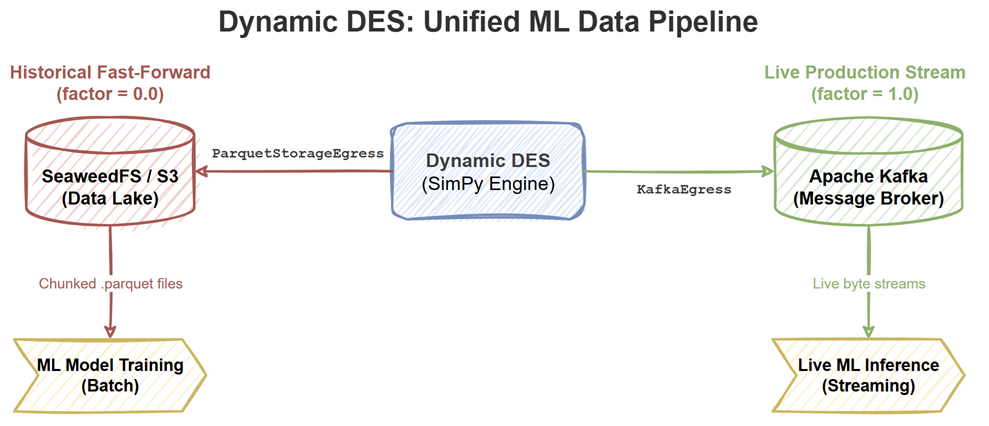

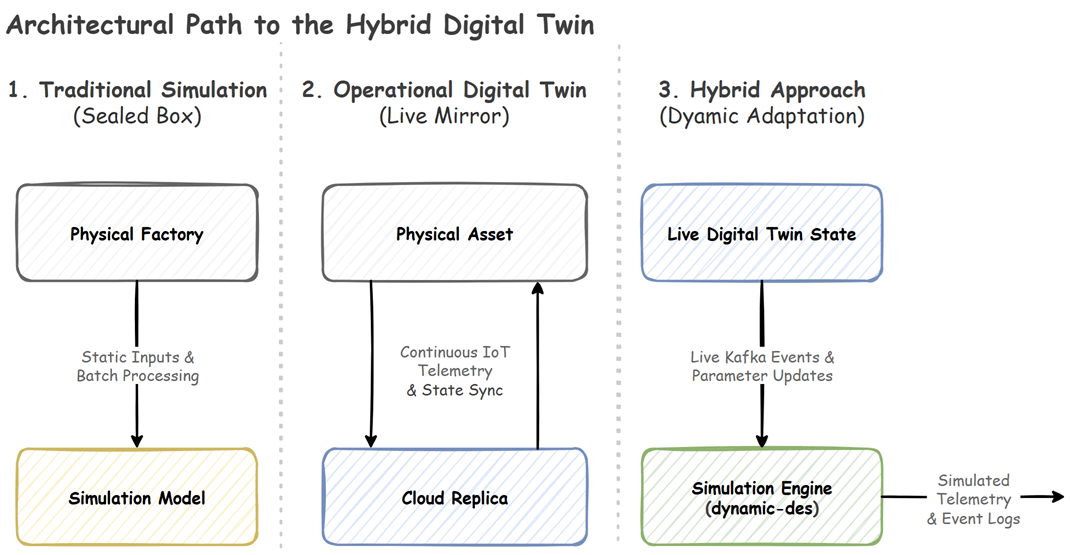

Dynamic DES v0.8.1 introduces native Data Lake integration. Learn how to use a single SimPy codebase to generate batch Parquet data for ML training, and seamlessly transition to streaming live Kafka events for production inference.

A comprehensive walkthrough from my session at Current London 2026 on capturing and visualizing data lineage across a production-style data stack.

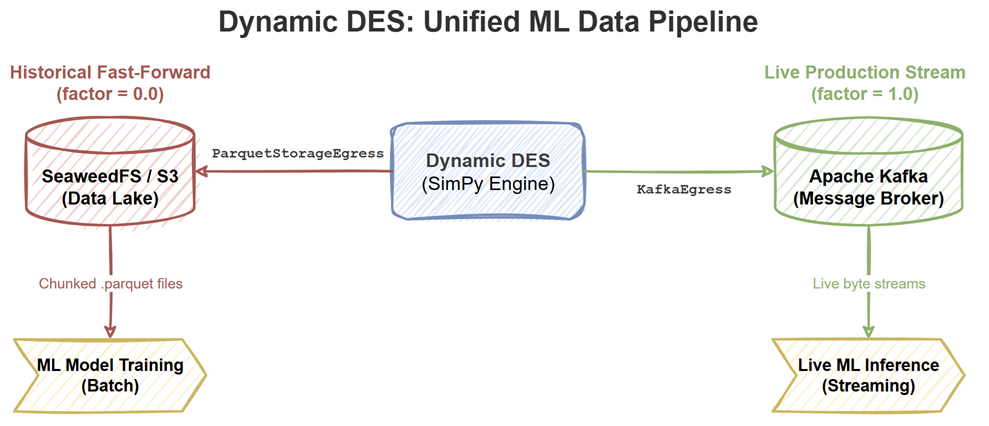

In Part 2 of our series, we dive into the code and architecture of dynamic-des. Learn how to use the Switchboard pattern, mutable resources, and dynamic topic routing to transform a static model into a synchronized forecasting engine.

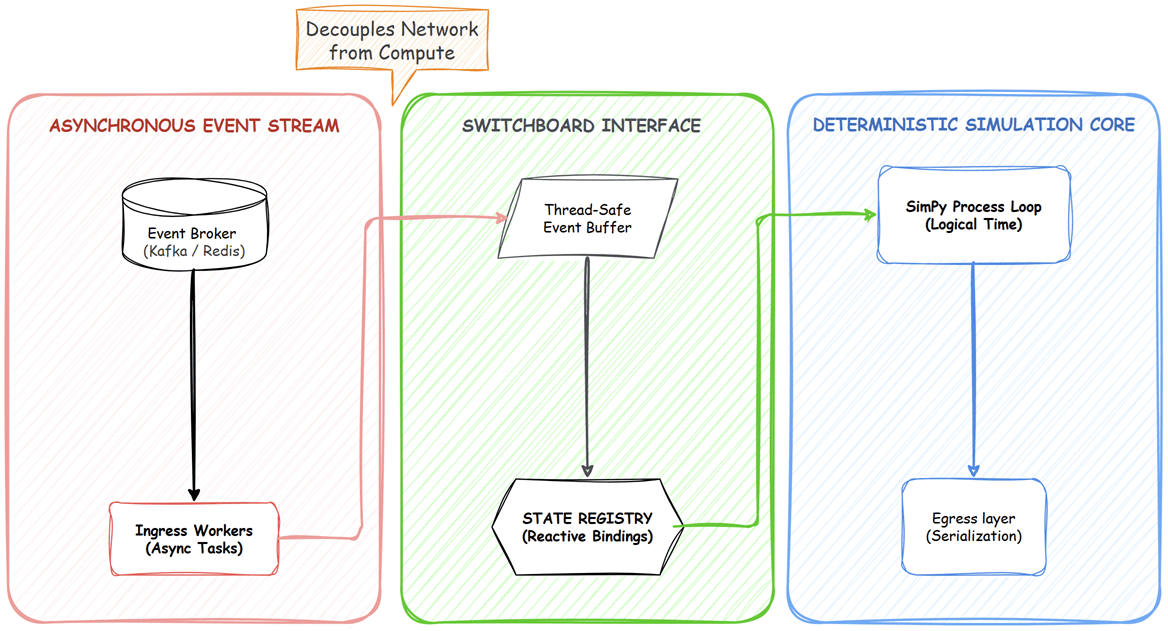

Many systems marketed as digital twins exist in an ambiguous middle ground. We look at the architectural layers separating traditional simulations, operational twins, and event-driven hybrid pipelines.

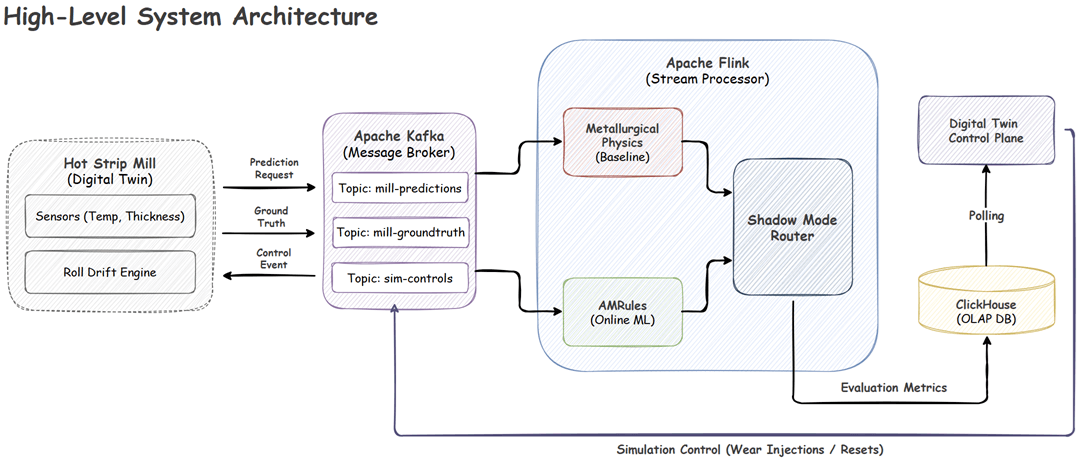

Discover how to build a fault-tolerant streaming architecture using Apache Flink and Kotlin. This guide applies Online Machine Learning to detect concept drift autonomously and correct for physical machinery wear in real time, all safely managed by a deterministic Shadow Mode router.

For a long time, I wanted a way to host my technical presentations directly on my website without relying on external platforms or bulky PDF exports. I wanted a “Slides as Code” approach: version-controlled Markdown files that live natively alongside my blog posts.

In Part 1, we built a contextual bandit prototype using Python and Mab2Rec. While effective for testing algorithms locally, a monolithic script cannot handle production scale. Real-world recommendation systems require low-latency inference for users and high-throughput training for model updates.

This post demonstrates how to decouple these concerns using an event-driven architecture with Apache Flink, Kafka, and Redis.

Traditional recommendation systems often struggle with cold-start users and with incorporating immediate contextual signals. In contrast, Contextual Multi-Armed Bandits, or CMAB, learn continuously in an online setting by balancing exploration and exploitation using real-time context. In Part 1, we develop a Python prototype that simulates user behavior and validates the algorithm, establishing a foundation for scalable, real-time recommendation systems.
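To make the exploration/exploitation trade-off concrete, here is a minimal sketch of a contextual bandit in plain Python. It is not the Mab2Rec-based prototype from the post: it swaps in a simple tabular epsilon-greedy policy, and the class name, the contexts (`morning`, `evening`), the arms (`news`, `music`), and the click probabilities are all hypothetical values chosen for illustration.

```python
import random
from collections import defaultdict


class EpsilonGreedyContextualBandit:
    """Tabular contextual bandit: one running mean reward per (context, arm)."""

    def __init__(self, arms, epsilon=0.1, seed=0):
        self.arms = list(arms)
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.counts = defaultdict(int)    # (context, arm) -> number of pulls
        self.values = defaultdict(float)  # (context, arm) -> mean observed reward

    def select(self, context):
        # Explore: with probability epsilon, pick a random arm.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.arms)
        # Exploit: pick the arm with the best current estimate for this context.
        return max(self.arms, key=lambda arm: self.values[(context, arm)])

    def update(self, context, arm, reward):
        # Incremental mean update, so learning happens one event at a time.
        key = (context, arm)
        self.counts[key] += 1
        self.values[key] += (reward - self.values[key]) / self.counts[key]


def simulate(rounds=5000, seed=42):
    # Hypothetical click probabilities per (context, arm), for illustration only.
    click_prob = {
        ("morning", "news"): 0.8, ("morning", "music"): 0.2,
        ("evening", "news"): 0.1, ("evening", "music"): 0.7,
    }
    env = random.Random(seed)
    bandit = EpsilonGreedyContextualBandit(["news", "music"], epsilon=0.1, seed=seed)
    for _ in range(rounds):
        context = env.choice(["morning", "evening"])
        arm = bandit.select(context)
        reward = 1.0 if env.random() < click_prob[(context, arm)] else 0.0
        bandit.update(context, arm, reward)
    return bandit
```

Calling `simulate()` returns a bandit whose `values` table should rank `news` above `music` in the morning and the reverse in the evening. A production policy would typically replace the lookup table with a model over context features, but the select/update loop keeps the same shape.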

A couple of years ago, I read Stream Processing with Apache Flink and worked through the examples using PyFlink. While the book offered a solid introduction to Flink, I frequently hit limitations with the Python API, as many features from the book weren’t supported. This time, I decided to revisit the material, but using Kotlin. The experience has been much more rewarding and fun.

In porting the examples to Kotlin, I also took the opportunity to align the code with modern Flink practices. The complete source for this post is available in the stream-processing-with-flink directory of the flink-demos GitHub repository.

The standard architecture for modern web applications involves a decoupled frontend, typically built with a JavaScript framework, and a backend API. This pattern is powerful but introduces complexity in managing two separate codebases, development environments, and the API contract between them.

This article explores an alternative approach: an integrated architecture where the backend API and the frontend UI are served from a single, cohesive Python application.