Beyond CAD Models

There is a project by Dassault Systèmes called the Living Heart that illustrates the trajectory of this technology. Instead of relying on standard 2D scans, surgeons can pull up a high-fidelity 3D model of a patient’s heart that simulates blood flow, mechanics, and electricity based on imaging-derived reconstructions and population-based physiological calibration.

While the Living Heart is closer to a personalized, highly-parameterized simulation than a continuously streaming IoT system, it highlights the core philosophy of a modern digital twin: moving past static CAD files to create models that are fundamentally aligned with a specific, real-world physical instance.

Industry Applications

While manufacturing initially drove this technology, digital twins, systems that periodically or continuously align a digital model with physical reality, exist on a wide spectrum. Some are event-driven, some are periodically synchronized, and others are simulation-first with occasional calibration.

- Smart Manufacturing: On automated factory floors, robotic arms stream telemetry to virtual models. This allows plant managers to track maintenance needs and test routing scenarios based on the current state of the factory.

- Supply Chain and Logistics: Global supply chains use digital twins to track everything from warehouse inventory levels to fleet movements, achieving end-to-end visibility.

- Cybersecurity and Infrastructure: Network digital twins are increasingly used to replicate network topology and traffic models. Security teams use these digital replicas as testbeds to safely test rate-limiting and AI behavioral rules against simulated DDoS attacks without risking live infrastructure.

- Next-Generation Telecommunications: Telecom operators build digital twins of their networks to manage dynamic bandwidth allocation and network slicing, analyzing traffic spikes across thousands of nodes before they cause outages.

Reality Gap: Simulations vs. Digital Twins

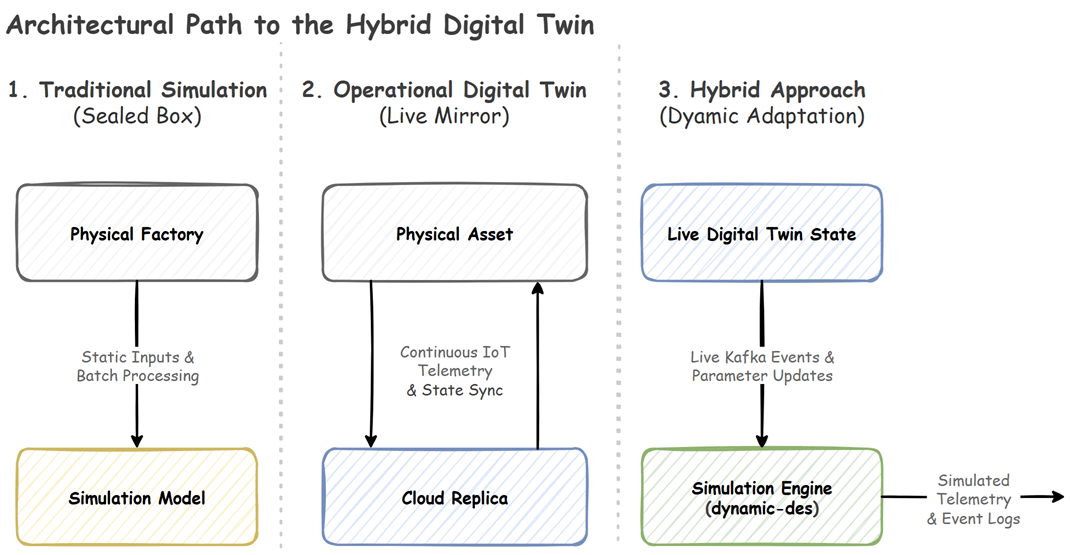

The tech industry frequently uses the terms “simulation” and “digital twin” interchangeably. Clarifying how these systems handle state and time is critical for evaluating modern control architectures. To understand this practically, let’s look at a factory floor where “Machine B” suddenly breaks down.

Here is how different architectural patterns handle that exact event:

1. Traditional Simulation (Isolated Execution)

A simulation is a predictive model designed to explore “what if” scenarios.

- Scenario: On Monday morning, an engineer runs a Discrete-Event Simulation (DES) of the factory. On Tuesday, Machine B physically breaks.

- Limitation: A traditional simulation run is an isolated execution model. Unless the system is built to re-seed its parameters from a database and trigger a new batch run, the ongoing execution remains unaware of the real-world breakdown. It will incorrectly continue assuming Machine B is running at full capacity.

2. Operational Digital Twin (State Synchronization)

An operational digital twin extends a model by aligning it with reality using time-series databases and IoT connectivity.

- Scenario: The moment Machine B breaks, sensors ping the database. The twin immediately updates the machine’s live status to “Offline.”

- Limitation: In practice, a digital twin is usually a stack of overlapping layers. The core synchronization layer provides state fidelity, while separate analytics layers handle forecasting. However, if a system only utilizes the synchronization layer as a dashboard without integrating predictive models, it often relies on separate analytics modules, external simulation tools, or human operators to forecast future cascading consequences.

3. Event-Driven Hybrid Execution (Dynamic Adaptation)

Advanced architectures blend these layers into unified ecosystems, feeding real-world states directly into predictive engines. While many systems accomplish this through windowed re-execution or micro-batch propagation, another opinionated architectural pattern is event-driven mutation.

- Scenario: The operational twin registers the breakdown and emits a live Kafka event. A background hybrid simulation engine ingests that event while it is actively running. It dynamically mutates Machine B’s capacity parameter to zero and recalculates its logic on the fly. It immediately outputs new telemetry: “Machine B’s queue is backing up, routing logic must shift, and a bottleneck will form at Machine C in exactly 45 minutes.”

Upgraded Simulation Fallacy

Because of overlapping definitions, organizations often fall into what we might call the “Upgraded Simulation Fallacy”. They purchase standard simulation software, attach a 3D dashboard, and market the project as a digital twin. If the system lacks the data pipelines to periodically align with live operational states, it sits in an ambiguous middle zone, delivering some monitoring value, but falling short of a fully integrated twin ecosystem.

Transitioning to an operational twin introduces strict engineering realities:

- Cost and Complexity: Building them requires heavy investments in sensors, edge computing, and complex data architecture.

- Data Pipelines and Standardization: Getting disparate sensors to speak the same language and pipe into a central engine requires robust schemas (like Pydantic, Avro, or Protobuf).

This last point is where many hybrid projects stall. If you naively force real-time asynchronous updates directly into a standard simulation loop, the engine’s internal clock can bottleneck while waiting for network requests. To avoid this design anti-pattern, teams must explicitly decouple their network I/O from the simulation execution or rely on rolling micro-batches.

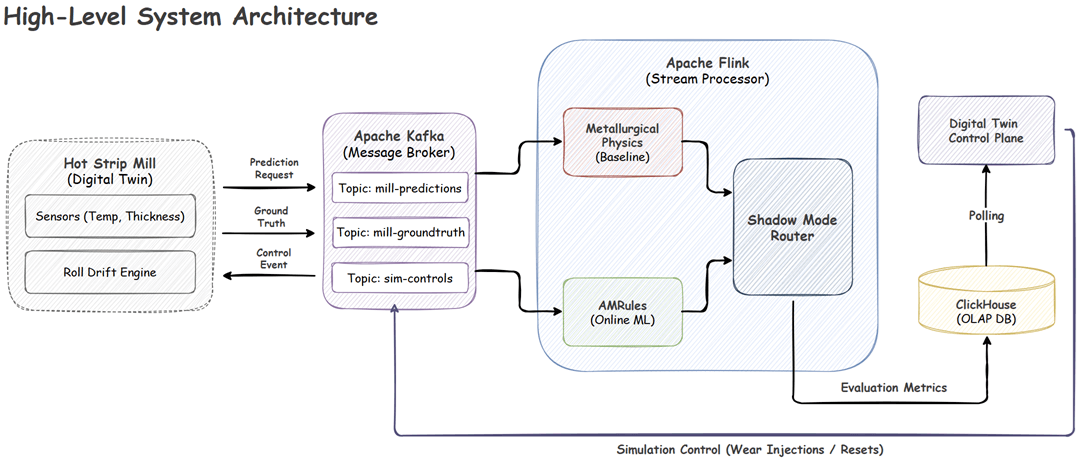

Taking Control with dynamic-des

Organizations shouldn’t have to choose between an isolated simulation and an operational dashboard. The goal is to combine them, running “what-if” forecasts against synchronized, real-world states.

But if you want to implement an event-driven architecture where a running mathematical model ingests Kafka streams and alters its parameters on the fly without stopping, you face a complex I/O challenge.

One purpose-built approach to solving this specific pattern is the dynamic-des package. In Part 2 of this series, we will look at how this open-source tool uses a Switchboard pattern and dynamic mutable resources to turn a static SimPy script into a synchronized, event-driven engine.

Comments