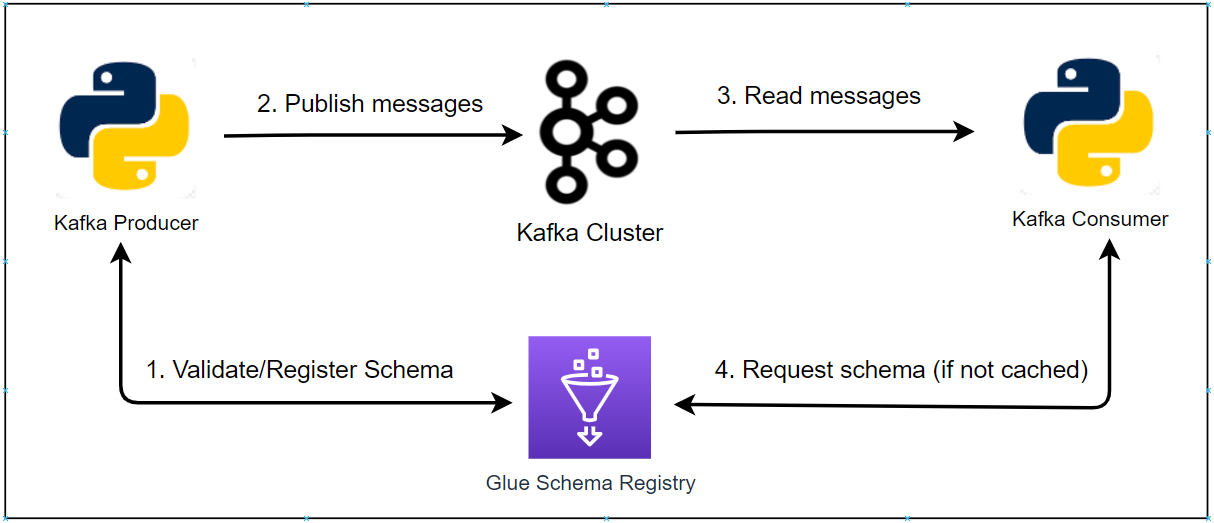

In the previous posts, we discussed how to implement client authentication by TLS (SSL or TLS/SSL) and SASL authentication. One of the key benefits of client authentication is achieving user access control. In this post, we will discuss how to configure Kafka authorization with Java and Python client examples while SASL is kept for client authentication.