A comprehensive walkthrough from my session at Current London 2026 on capturing and visualizing data lineage across a production-style data stack.

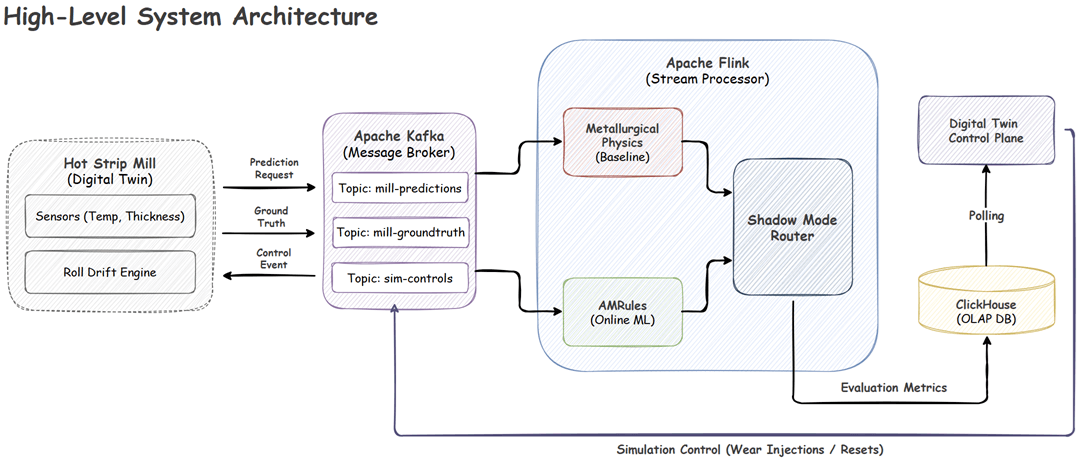

Discover how to build a fault-tolerant streaming architecture using Apache Flink and Kotlin. This guide demonstrates how to apply Online Machine Learning to autonomously detect concept drift and correct for physical machinery wear in real time, with the whole process safely managed by a deterministic Shadow Mode router.

In Part 1, we built a contextual bandit prototype using Python and Mab2Rec. While effective for testing algorithms locally, a monolithic script cannot handle production scale. Real-world recommendation systems require low-latency inference for users and high-throughput training for model updates.

This post demonstrates how to decouple these concerns using an event-driven architecture with Apache Flink, Kafka, and Redis.

A couple of years ago, I read Stream Processing with Apache Flink and worked through the examples using PyFlink. While the book offered a solid introduction to Flink, I frequently hit limitations with the Python API, as many features from the book weren’t supported. This time, I decided to revisit the material, but using Kotlin. The experience has been much more rewarding and fun.

In porting the examples to Kotlin, I also took the opportunity to align the code with modern Flink practices. The complete source for this post is available in the stream-processing-with-flink directory of the flink-demos GitHub repository.

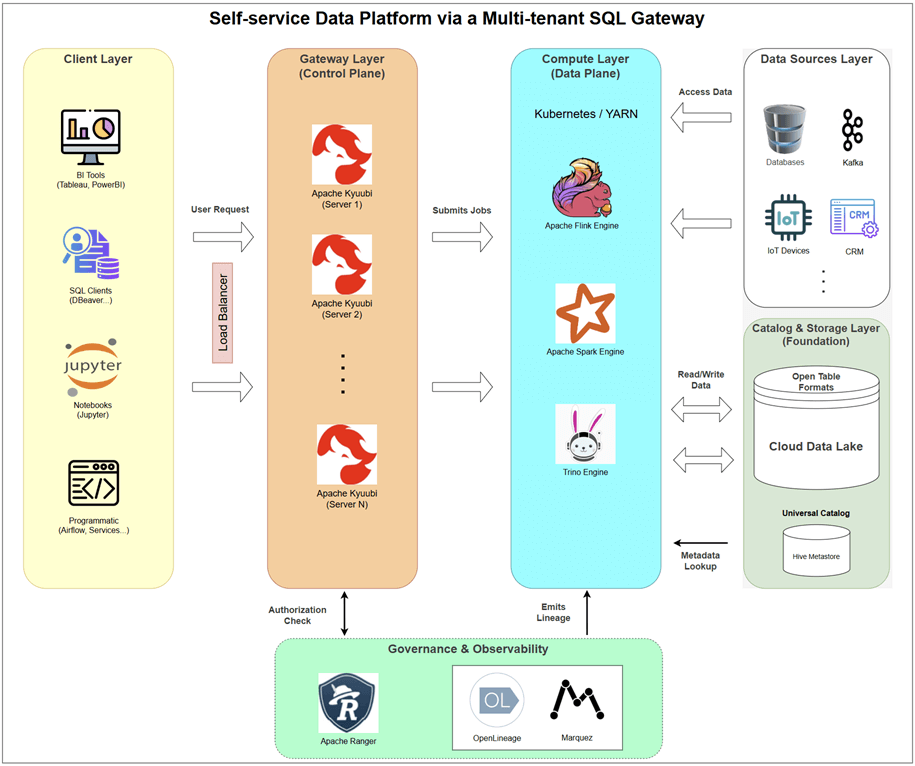

Providing direct access to big data engines like Spark and Flink often creates chaos. A gateway-centric architecture solves this by introducing a robust control plane. This article presents a detailed blueprint using Apache Kyuubi, a multi-tenant SQL gateway, to provision and manage on-demand Spark, Flink, and Trino engines. Learn how this model delivers true self-service analytics with centralized governance, finally resolving the conflict between user empowerment and platform stability.

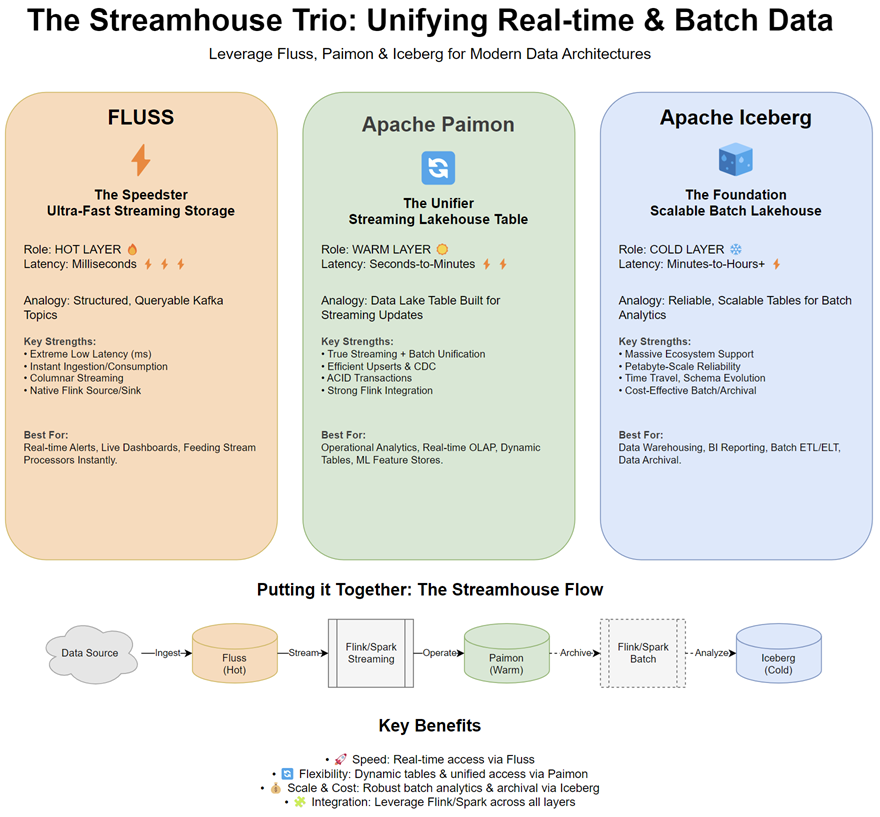

The world of data is converging. The traditional divide between batch processing for historical analytics and stream processing for real-time insights is becoming increasingly blurry. Businesses demand architectures that handle both seamlessly. Enter the “Streamhouse”, an evolution of the Lakehouse concept, designed with streaming as a first-class citizen.

Today, we’ll introduce three key open-source technologies shaping this space: Apache Paimon™, Fluss, and Apache Iceberg. While each has unique strengths, their true power lies in how they can be integrated to build robust, flexible, and performant data platforms.

The Flink SQL Cookbook by Ververica is a hands-on, example-rich guide to mastering Apache Flink SQL for real-time stream processing. It offers a wide range of self-contained recipes, from basic queries and table operations to more advanced use cases like windowed aggregations, complex joins, user-defined functions (UDFs), and pattern detection. These examples are designed to be run on the Ververica Platform, and as such, the cookbook doesn’t include instructions for setting up a Flink cluster.

To help you run these recipes locally and explore Flink SQL without external dependencies, this post walks through setting up a fully functional local Flink cluster using Docker Compose. With this setup, you can experiment with the cookbook examples right on your machine.

In Part 9, we developed two Apache Beam pipelines using Splittable DoFn (SDF). One of them is a batch file reader, which lists the files in an input folder and then processes them in parallel. We can extend the I/O connector so that, instead of listing files once at the beginning, it scans the input folder periodically and processes new files as they are created. The techniques used in this post can be applied to developing I/O connectors that target other unbounded (or streaming) data sources (e.g. Kafka) using the Python SDK.
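The periodic-scan idea can be illustrated outside of Beam with a minimal sketch. It is not the post's SDF implementation: `poll_new_files` and the `seen` set are hypothetical names introduced here, and a real connector would schedule the polling and track progress through the runner instead of a plain in-memory set.

```python
import os


def poll_new_files(folder: str, seen: set[str]) -> list[str]:
    """Return files in `folder` that were not present in earlier polls.

    `seen` is mutated in place so that each file is reported only once.
    This is an illustrative helper, not part of the Apache Beam API.
    """
    # List regular files only; sort for a stable processing order.
    current = sorted(entry.path for entry in os.scandir(folder) if entry.is_file())
    new_files = [path for path in current if path not in seen]
    seen.update(new_files)
    return new_files
```

Calling the function repeatedly on a timer with the same `seen` set yields each file exactly once, which is the behavior an unbounded file source needs.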

A Splittable DoFn (SDF) is a generalization of a DoFn that enables Apache Beam developers to create modular and composable I/O components. It can also be applied in advanced non-I/O scenarios such as Monte Carlo simulation. In this post, we develop two Apache Beam pipelines. The first pipeline is an I/O connector that lists the files in a folder and then processes each file object in parallel. The second pipeline estimates the value of $\pi$ by performing Monte Carlo simulation.
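For readers unfamiliar with the technique, here is a minimal plain-Python sketch of estimating $\pi$ by Monte Carlo simulation. It does not use Beam; the function names and the chunking scheme are assumptions made for illustration, with the independent chunks standing in for the sub-ranges that an SDF could process in parallel.

```python
import random


def count_hits(num_samples: int, seed: int) -> int:
    """Count random points in the unit square that fall inside the quarter circle."""
    rng = random.Random(seed)  # per-chunk generator keeps chunks independent
    hits = 0
    for _ in range(num_samples):
        x, y = rng.random(), rng.random()
        if x * x + y * y <= 1.0:
            hits += 1
    return hits


def estimate_pi(num_samples: int, num_chunks: int = 4) -> float:
    """Estimate pi from roughly `num_samples` points split into independent chunks."""
    chunk_size = num_samples // num_chunks
    if chunk_size == 0:
        raise ValueError("num_samples must be at least num_chunks")
    total = chunk_size * num_chunks
    # Each chunk could run on a separate worker; here they run sequentially.
    hits = sum(count_hits(chunk_size, seed) for seed in range(num_chunks))
    # The quarter circle covers pi/4 of the unit square.
    return 4.0 * hits / total
```

The fraction of points inside the quarter circle approximates $\pi/4$, so multiplying by 4 gives the estimate, and accuracy improves as the sample count grows.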

In Part 3, we developed a Beam pipeline that tracks users' sports activities and outputs their speeds periodically. While reporting such values is useful on its own, we can provide more engaging information if we have a pipeline that reports the pacing of their activities over time. For example, we can send a message to encourage a user to work harder if they have a performance goal and have been underperforming for some periods. In this post, we develop a new pipeline that tracks user activities and reports pacing details by comparing short-term metrics to their long-term counterparts.
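The comparison of short-term and long-term metrics can be sketched in plain Python as follows. This is an illustration only: the function name, the window sizes, and the 10% tolerance are assumptions, and the post's pipeline would compute the averages with Beam windowing instead of list slices.

```python
from statistics import mean


def pacing_status(speeds: list[float], short_n: int, long_n: int,
                  tolerance: float = 0.1) -> str:
    """Classify pacing by comparing a short-term average speed to a long-term one.

    `speeds` holds periodic speed readings, oldest first. The window sizes and
    the tolerance are hypothetical parameters chosen for this sketch.
    """
    if not speeds or short_n <= 0 or long_n <= 0:
        raise ValueError("need at least one reading and positive window sizes")
    short_avg = mean(speeds[-short_n:])  # most recent readings
    long_avg = mean(speeds[-long_n:])    # longer history, including recent ones
    if long_avg == 0:
        return "on pace"
    ratio = short_avg / long_avg
    if ratio < 1 - tolerance:
        return "underperforming"
    if ratio > 1 + tolerance:
        return "outperforming"
    return "on pace"
```

A user whose recent readings drop well below their longer-run average would be flagged as underperforming, which is the case where an encouraging message could be sent.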