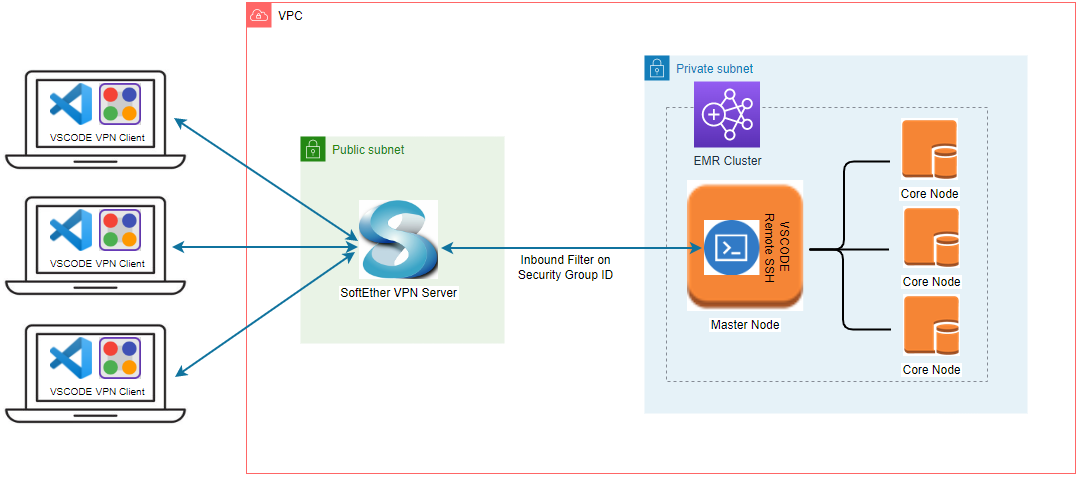

We will discuss how to set up a remote development environment on an EMR cluster deployed in a private subnet, connecting to it through a VPN and the VS Code Remote SSH extension. We will then walk through typical Spark development examples while multiple users share the same cluster. Overall, this setup offers an effective way of developing Spark apps on EMR and can improve the developer experience significantly.